Academic homepage of Andreas van Cranenburgh

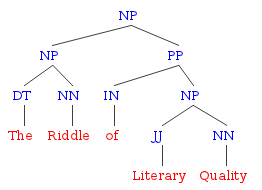

I am an assistant professor in digital humanities and information sciences at the University of Groningen and a member of the CLCG computational linguistics group. Previously I was a postdoc at Heinrich Heine Universität Düsseldorf in the Beyond CFG project, and a PhD candidate in the project The Riddle of Literary Quality. My research areas are computational linguistics and computational humanities, with a particular focus on literature and coreference.

Mail: a.w.van.cranenburgh@rug.nl

Code: https://github.com/andreasvc and https://gist.github.com/andreasvc/

Profiles: Google Scholar; Semantic Scholar; ACL Anthology; DBLP; ORCiD.

Education

- PhD in Computational Linguistics (2016), University of Amsterdam. PhD thesis: Rich statistical parsing and literary language (revised version; errata).

- MSc. in Logic (2011), University of Amsterdam. MSc. thesis: Discontinuous Data-Oriented Parsing through Mild Context-Sensitivity (code).

- BSc. in Artificial Intelligence (2009), University of Amsterdam. BSc. thesis: Simulating Language Games in the Two Word Stage.

Peer reviewed publications (bibtex)

Ruhi Mahadeshwar, Andreas van Cranenburgh, Tommaso Caselli, Malvina Nissim (2026).

Evaluating the Impact of Source Diversity for RAG in Historical Research.

Proceedings of LREC, pp. 716-734.

https://doi.org/10.63317/4yz9z3uvzasd

Perry van der Zande, Andreas van Cranenburgh, Frank Tsiwah (2026).

From thought to language: Comparing schizophrenia spectrum disorders and Wernicke's aphasia with machine learning and LLMs.

Schizophrenia Research: Cognition 45. 100443.

https://doi.org/10.1016/j.scog.2026.100443 (code)

Noa Visser Solissa, Paul Sheridan, Mikael Onsjö, Andreas van Cranenburgh, Federico Pianzola (2026).

A Classification Benchmark Based on the Literary Theme Ontology.

Journal of Open Humanities Data, 12: 31, pp. 1–16.

https://doi.org/10.5334/johd.480

Noa Visser Solissa, Andreas van Cranenburgh, Federico Pianzola (2025).

Event Detection between Literary Studies and NLP: A Survey, a Narratological Reflection, and a Case Study.

Journal of Computational Literary Studies 4(1).

https://doi.org/10.48694/jcls.4215

Paschalis Agapitos, Andreas van Cranenburgh (2024).

A Stylometric Analysis of Seneca's disputed plays. Authorship Verification of Octavia and Hercules Oetaeus.

Journal of Computational Literary Studies 3(1), 1-32.

https://doi.org/10.48694/jcls.3919 (code)

Andreas van Cranenburgh, Laura Allen, Serge Sharoff, Karina van Dalen-Oskam (2024).

Computational methods for the analysis of fiction genres.

In: Multidisciplinary Views on Discourse Genre, edited by Stukker, Ninke, et al., Routledge, pp. 135–167.

https://doi.org/10.4324/9781003335603-6 (code)

Andreas van Cranenburgh (2024).

Dutch Strong and Weak Pronouns as a Stylistic Marker of Literariness.

In: Digital Stylistics in Romance Studies and Beyond, edited by Hesselbach, Robert, et al., Heidelberg University Publishing, pp. 217–234.

https://doi.org/10.17885/heiup.1157.c19373 (code)

Frank Tsiwah, Anas Mayya, and Andreas van Cranenburgh (2024).

Semantic-based NLP techniques discriminate schizophrenia and Wernicke's aphasia based on spontaneous speech.

Proceedings of the Fifth Workshop on Resources and ProcessIng of linguistic, para-linguistic and extra-linguistic Data from people with various forms of cognitive/psychiatric/developmental impairments @LREC-COLING 2024, pages 1–8.

https://aclanthology.org/2024.rapid-1.1/

Andre Wolters, Andreas van Cranenburgh (2024).

Historical Dutch Spelling Normalization with Pretrained Language Models.

Computational Linguistics in the Netherlands Journal, vol. 13, pp. 147–171.

https://clinjournal.org/clinj/article/view/178 (code)

Antonio Toral, Andreas van Cranenburgh, Tia Nutters (2024).

Literary-adapted machine translation in a well-resourced language pair: Explorations with More Data and Wider Contexts.

In: Computer-Assisted Literary Translation, edited by Andrew Rothwell, Andy Way, Roy Youdale. Routledge.

https://www.routledge.com/Computer-Assisted-Literary-Translation/Rothwell-Way-Youdale/p/book/9781032413006

Joris van Zundert, Andreas van Cranenburgh, Roel Smeets (2023).

Putting Dutchcoref to the Test: Character Detection and Gender Dynamics in Contemporary Dutch Novels.

Computational Humanities Research conference, pp. 757-771.

https://ceur-ws.org/Vol-3558/paper9264.pdf

Noa Visser Solissa, Andreas van Cranenburgh (2023).

A Distant Reading of Gender Bias in Dutch Literary Prizes.

Digital Humanities Benelux journal, vol. 5.

https://journal.dhbenelux.org/wp-content/uploads/2023/09/DH_Benelux_Journal_Volume_5_3_Visser.pdf

Andreas van Cranenburgh, Frank van den Berg (2023).

Direct Speech Quote Attribution for Dutch Literature.

Proceedings of LaTeCH-CLfL, pp. 45–62.

https://aclanthology.org/2023.latechclfl-1.6/

Andreas van Cranenburgh, Gertjan van Noord (2022).

OpenBoek: A Corpus of Literary Coreference and Entities with an Exploration of Historical Spelling Normalization.

Computational Linguistics in the Netherlands Journal, vol. 12, pp. 235–251.

https://clinjournal.org/clinj/article/view/157 (data)

Andreas van Cranenburgh, Erik Ketzan (2021).

Stylometric Literariness Classification: the Case of Stephen King.

Proceedings of LaTeCH-CLfL, pp. 189–197.

https://aclanthology.org/2021.latechclfl-1.21 (code)

Andreas van Cranenburgh, Esther Ploeger, Frank van den Berg, Remi Thüss (2021).

A Hybrid Rule-Based and Neural Coreference Resolution System with an Evaluation on Dutch Literature.

Proceedings of CRAC workshop, pp. 47–56.

https://aclanthology.org/2021.crac-1.5 (code/models)

Severi Luoto and Andreas van Cranenburgh (2021).

Psycholinguistic dataset on language use in 1145 novels published in English and Dutch.

Data in Brief, 34, https://doi.org/10.1016/j.dib.2020.106655

Corbèn Poot, Andreas van Cranenburgh (2020).

A Benchmark of Rule-Based and Neural Coreference Resolution in Dutch Novels and News.

Proceedings of CRAC workshop, pp. 79–90.

https://aclanthology.org/2020.crac-1.9/ (models, slides)

Andreas van Cranenburgh, Corina Koolen (2020).

Results of a Single Blind Literary Taste Test with Short Anonymized Novel Fragments.

Proceedings of LaTeCH-CLfL, pp. 121–126.

https://aclanthology.org/2020.latechclfl-1.14/ (code, poster)

Wietse de Vries, Andreas van Cranenburgh, Malvina Nissim (2020).

What's so special about BERT's layers? A closer look at the NLP pipeline in monolingual and multilingual models.

Findings of EMNLP, pp. 4339–4350.

https://aclanthology.org/2020.findings-emnlp.389

(code)

Stephan Tulkens, Andreas van Cranenburgh (2020).

Embarrassingly Simple Unsupervised Aspect Extraction.

Proceedings of ACL, pp. 3182-3187.

https://aclanthology.org/2020.acl-main.290

(code)

Andreas van Cranenburgh (2020).

An Empirical Evaluation of Sentiment Analysis on Movie Scripts.

DH Benelux 2020. https://zenodo.org/record/3862158 (slides)

Corina Koolen, Karina van Dalen-Oskam, Andreas van Cranenburgh, Erica Nagelhout (2020).

Literary quality in the eye of the Dutch reader: The National Reader Survey.

Poetics, vol. 79, https://doi.org/10.1016/j.poetic.2020.101439

Andreas van Cranenburgh (2019).

A Dutch coreference resolution system with an evaluation on literary fiction.

Computational Linguistics in the Netherlands Journal, vol. 9, pp. 27-54.

https://clinjournal.org/clinj/article/view/91

(code; errata)

Andreas van Cranenburgh, Corina Koolen (2019).

The Literary Pepsi Challenge: intrinsic and extrinsic factors in judging literary quality.

Digital Humanities 2019, Utrecht, The Netherlands, 9-12 July.

http://andreasvc.github.io/dh2019.pdf

Andreas van Cranenburgh, Karina van Dalen-Oskam, Joris van Zundert (2019).

Vector space explorations of literary language.

Language Resources & Evaluation, vol. 53, no. 4, pp. 625-650.

https://doi.org/10.1007/s10579-018-09442-4

(code)

Tatiana Bladier, Andreas van Cranenburgh, Kilian Evang, Laura Kallmeyer, Robin Möllemann, Rainer Osswald (2018).

RRGbank: a Role and Reference Grammar Corpus of Syntactic Structures Extracted from the Penn Treebank.

Proceedings of Treebanks and Linguistic Theories, pp. 5-16.

http://www.ep.liu.se/ecp/155/003/ecp18155003.pdf

Andreas van Cranenburgh (2018).

Cliche expressions in literary and genre novels.

Proceedings of LaTeCH-CLfL workshop.

http://aclanthology.org/W18-4504

(code)

Andreas van Cranenburgh (2018).

Active DOP: A constituency treebank annotation tool with online learning.

Proceedings of COLING 2018 demonstrations track.

http://aclanthology.org/C18-2009

(code)

Tatiana Bladier, Andreas van Cranenburgh, Younes Samih, Laura Kallmeyer (2018).

German and French Neural Supertagging Experiments for LTAG Parsing.

ACL 2018 student research workshop.

http://aclanthology.org/P18-3009

Corina Koolen, Andreas van Cranenburgh (2018).

Blue eyes and porcelain cheeks: Computational extraction of physical descriptions from Dutch chick lit and literary novels.

Digital Scholarship in the Humanities, vol. 33, no. 1, pp. 59–71.

https://academic.oup.com/dsh/article/3091837

Corina Koolen, Andreas van Cranenburgh (2017).

These are not the Stereotypes You are Looking For: Bias and Fairness in Authorial Gender Attribution.

Proceedings of the First Ethics in NLP workshop, pp. 12-22.

http://aclanthology.org/W17-1602

(notebook)

Andreas van Cranenburgh, Rens Bod (2017).

A Data-Oriented Model of Literary Language.

Proceedings of EACL, pp. 1228-1238.

http://aclanthology.org/E17-1115

(code; slides; Q&A)

Andreas van Cranenburgh, Remko Scha, Rens Bod (2016).

Data-Oriented Parsing with Discontinuous Constituents and Function Tags.

Journal of Language Modelling, vol. 4, no. 1, pp. 57-111.

http://dx.doi.org/10.15398/jlm.v4i1.100

(code; grammars)

Kim Jautze, Andreas van Cranenburgh, Corina Koolen (2016).

Topic Modeling Literary Quality.

Digital Humanities 2016, Krakow, Poland, 11-16 July.

http://andreasvc.github.io/dh2016.pdf

Andreas van Cranenburgh (2016).

Machine Learning Literature using Textual Features.

Tiny Transactions on Computer Science, vol. 4.

http://tinytocs.ece.utexas.edu/papers/tinytocs4_paper_cranenburgh.pdf

Andreas van Cranenburgh, Corina Koolen (2015).

Identifying Literary Novels with Bigrams.

Proceedings of the Fourth Workshop on Computational Linguistics for Literature, pp. 58-67.

http://aclanthology.org/W15-0707

(poster)

Federico Sangati, Andreas van Cranenburgh (2015).

Multiword Expression Identification with Recurring Tree Fragments and Association Measures.

Proceedings of the 11th Workshop on Multiword Expressions, pp. 10-18.

http://aclanthology.org/W15-0902

(slides)

Andreas van Cranenburgh (2014).

Extraction of Phrase-Structure Fragments with a Linear Average Time Tree Kernel.

Computational Linguistics in the Netherlands Journal, vol. 4, pp. 3-16.

https://clinjournal.org/clinj/article/view/36

Dirk Roorda, Gino Kalkman, Martijn Naaijer, Andreas van Cranenburgh (2014).

LAF-Fabric: a data analysis tool for Linguistic Annotation Framework with an application to the Hebrew Bible.

Computational Linguistics in the Netherlands Journal, vol. 4, pp. 105-120.

https://clinjournal.org/clinj/article/view/44

Andreas van Cranenburgh, Rens Bod (2013).

Discontinuous Parsing with an Efficient and Accurate DOP Model.

Proceedings of the International Conference on Parsing Technologies, Nara, Japan, 27-29 November.

http://aclanthology.org/W13-5701

(slides; code; notes).

Kim Jautze, Corina Koolen, Andreas van Cranenburgh, Hayco de Jong (2013).

From high heels to weed attics: a syntactic investigation of chick lit and literature.

Proceedings of the Computational Linguistics for Literature workshop, Atlanta, Georgia, June 14.

http://aclanthology.org/W13-1410

(slides)

Andreas van Cranenburgh (2012).

Literary authorship attribution with phrase-structure fragments.

Proceedings of the Computational Linguistics for Literature workshop, pp. 59-63.

http://aclanthology.org/W12-2508

(code, slides, revised paper, including results on Federalist papers).

Andreas van Cranenburgh (2012).

Efficient parsing with linear context-free rewriting systems.

Proceedings of the 13th Conference of the European Chapter of the Association for Computational Linguistics (EACL), Avignon, France, April 23–27.

http://aclanthology.org/E12-1047 (poster, errata, corrected version, code).

Maria Aloni, Andreas van Cranenburgh, Raquel Fernández, Marta Sznajder (2012).

Building a Corpus of Indefinite Uses Annotated with Fine-grained Semantic Functions.

The eighth international conference on Language Resources and Evaluation (LREC), Istanbul, Turkey, May 23–25.

http://www.lrec-conf.org/proceedings/lrec2012/pdf/362_Paper.pdf

(corpus)

Andreas van Cranenburgh, Remko Scha, Federico Sangati (2011).

Discontinuous Data-Oriented Parsing: A mildly context-sensitive all-fragments grammar.

Proceedings of the 2nd Workshop on Statistical Parsing of Morphologically-Rich Languages (SPMRL), pages 34–44, Dublin, Ireland, October 6.

http://aclanthology.org/W11-3805

(slides, template for slides, code).

Andreas van Cranenburgh, Galit Sassoon, Raquel Fernández (2010).

Invented antonyms: Esperanto as a semantic lab.

Proceedings of the 26th Annual Meeting of the Israel Association for Theoretical Linguistics (IATL 26).

http://dare.uva.nl/en/record/371912

Reports

Wietse de Vries, Andreas van Cranenburgh, Arianna Bisazza, Tommaso Caselli, Gertjan van Noord, Malvina Nissim (2019).

BERTje: A Dutch BERT Model.

arXiv preprint 1912.09582.

http://arxiv.org/abs/1912.09582

Andreas van Cranenburgh (2012).

Extracting tree fragments in linear average time.

ILLC technical report.

http://dare.uva.nl/en/record/421534

Teaching

- BA/MA courses on data science and programming for humanities and information science students. University of Groningen, 2018–present.

- Dependency Parsing BSc/MSc course 2017, Heinrich Heine University. Together with Simon Petitjean.

- Digital Humanities BA Hons. course 2015, University of Amsterdam. Together with Corina Koolen.

Talks

- A Dutch coreference resolution system with an evaluation on literary fiction. Invited talk, University of Düsseldorf, November 7th, 2019 (slides).

- Dutch weak and strong pronouns as a stylistic marker of literariness. Digital Stylistics in Romance Studies and Beyond conference. February 27th, 2019. Wuerzburg (slides).

- A DOP Active Learning Prototype. Grammars, Computation & Cognition workshop, SMART Cognitive Science conference. December 6, 2017 (slides).

- Markers of Literary Language. ILLC Midwinter Colloquium. January 15, 2016 (slides).

- Revisiting competence & performance. Workshop 25 years of Data-Oriented Parsing. June 30, 2015 (slides).

- An efficient and linguistically rich statistical parser. Invited lecture at University of Gothenburg, April 16, 2015 (slides).

- Text Mining and Stylometry. Invited lecture at DH crash course, Amsterdam, October 23, 2014 (slides, ipython notebook).

- Data-Oriented Parsing and Discontinuous Constituents. Guest lecture in Unsupervised Language Learning course, University of Amsterdam, March 4, 2014 (slides).

- Linear average time extraction of phrase-structure fragments. Presented at the 24th Computational Linguistics in the Netherlands (CLIN) conference, Leiden, January 17, 2014 (slides).

- Estimating literary readability through lexical & syntactic complexity. Workshop Complexity in Digital Humanities, Meertens, Amsterdam, November 7th, 2013 (slides).

- Discontinuous Data-Oriented Parsing using Coarse-to-Fine methods. Invited talk, University of Düsseldorf, November 29th, 2012 (slides).

Academic service

- Reviewer for ACL 2013, EMNLP 2014, NAACL 2018, 2019, etc.

- Organizer of CLIN 29